Unit 66-67-67: Understand theory and applications of 3D

Applications of 3D

There are many different uses for 3D beyond modelling for games; it is also used in television and film. One of the most famous uses on TV must be the dragons from Game of Thrones. The team at Pixomondo used modelling software such as ZBrush to design the dragons, but also drew on real life, for example a chicken that the animators played around with to work out muscle movements. One of the most impressive things I have seen is an architectural walkthrough of a house created in Unreal Engine 4; it shows just how photorealistic 3D modelling can be.

https://youtu.be/E3LtFrMAvQ4?t=118

3D is also used in animation, for anything from television to education.

An application programming interface, or API, is a set of functions that lets a program ask other software or hardware to do work for it. In Microsoft Direct3D the API renders 3D objects; most graphics cards these days support it because of how much it speeds up 3D rendering within Windows. One of Direct3D's competitors is OpenGL, or Open Graphics Library, which is a cross-platform, cross-language API.

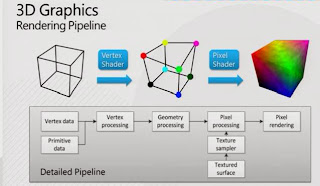

What is a Graphics Pipeline?

The graphics pipeline is what converts the 3D scene in the program into the 2D image we see on screen, without spending time rendering things we can't even see. At the start we have the bus interface, which basically tells the rest of the pipeline what to do. Vertex processing comes next: it gathers the position of every vertex on the shape and converts it into a 2D position on the screen; sometimes the vertex is also given a colour based on the light hitting it. The parts of the image that fall outside the view are then removed completely so that we do not have to render them; this is called clipping. The vertices are then assembled into triangles, which are filled in with pixels to make them whole. This is known as triangle setup followed by rasterization, and the pixel-sized pieces it produces are called fragments. Occlusion culling then removes the pixels that are hidden behind other objects; for example, if a rectangle was in front of a square, the pixels of the square behind the rectangle would not be rendered. A value is then assigned to each rasterized pixel by blending the values stored at the triangle's vertices; this is parameter interpolation. The pixel shader then determines the final colour and texture of the pixel. Finally, the pixel engine writes the fragment colours into the image to create a whole object on the screen, and the frame-buffer controller manages the memory that stores the finished image.
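The vertex processing and clipping steps can be illustrated with a small Python sketch. This is only a simplified illustration, not how Direct3D or OpenGL actually implement it: the function name `project_vertex`, the `focal_length` parameter and the 640x480 screen size are all assumptions made for the example, and the camera is assumed to sit at the origin looking along the positive Z axis.

```python
def project_vertex(x, y, z, focal_length=1.0, width=640, height=480):
    """Convert a 3D point in camera space into a 2D pixel position.

    Returns None when the point is clipped (behind the camera or off screen).
    All names and default values here are illustrative assumptions.
    """
    # Points at or behind the camera cannot be seen, so they are clipped.
    if z <= 0:
        return None

    # Perspective divide: things further away (larger z) end up closer
    # to the centre of the screen, which makes them look smaller.
    ndc_x = focal_length * x / z
    ndc_y = focal_length * y / z

    # Anything outside the visible square from -1 to 1 is clipped.
    if abs(ndc_x) > 1 or abs(ndc_y) > 1:
        return None

    # Map from the -1..1 range to pixel coordinates.
    # Screen rows count downwards, so the Y axis is flipped.
    pixel_x = (ndc_x + 1) / 2 * (width - 1)
    pixel_y = (1 - ndc_y) / 2 * (height - 1)
    return round(pixel_x), round(pixel_y)
```

Calling `project_vertex(1, 1, 1)` gives the top-right pixel of the screen, while a point behind the camera such as `project_vertex(0, 0, -1)` returns `None` because it has been clipped.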


Ray Tracing

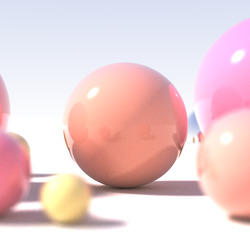

As you can see, radiosity has been applied to the second image, as it diffuses the light correctly, whereas the first image uses only direct illumination. However, unlike ray tracing, radiosity ignores specular reflection.
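To give a rough idea of what direct illumination means in practice, here is a minimal Python sketch that works out how bright a surface is when light hits it straight from a source. It uses the common Lambert (cosine) model, which is an assumption on my part and not necessarily what produced the images here; the function names `normalize` and `lambert_diffuse` are made up for the example. Radiosity would go further by also adding the light bounced between surfaces, which this sketch leaves out.

```python
import math


def normalize(vector):
    """Scale a 3D vector so that its length is 1."""
    length = math.sqrt(sum(c * c for c in vector))
    return tuple(c / length for c in vector)


def lambert_diffuse(normal, to_light, light_intensity=1.0):
    """Brightness of a surface lit directly by one light (Lambert model).

    normal   -- direction the surface is facing
    to_light -- direction from the surface towards the light
    """
    n = normalize(normal)
    l = normalize(to_light)
    # The dot product is the cosine of the angle between the two directions.
    cos_angle = sum(a * b for a, b in zip(n, l))
    # Surfaces facing away from the light receive no direct light at all.
    return light_intensity * max(0.0, cos_angle)
```

A surface facing the light head on gets the full intensity, one at 45 degrees gets about 0.71 of it, and one facing away gets none.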

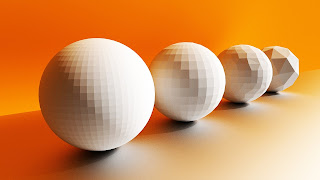

There are several types of lighting as well. Indirect light is where the light is reflected off surfaces in the room, mimicking how it would look in real life, for example light bouncing off a mirror. Local illumination is where the light comes directly from a light source, such as the sun shining straight down on us. To help the performance of your PC when rendering, you don't always have to render the model in full detail; for example, in the picture below the sphere is less detailed the further it is from the camera. This is known as level of detail (LOD) and can be set in Maya by using groups.
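The idea of swapping in a less detailed model as the object gets further from the camera can be sketched in a few lines of Python. This is not how Maya does it internally; the distance thresholds, the level names and the function `pick_lod` are all hypothetical values chosen just for the example.

```python
import math

# Hypothetical thresholds: (maximum distance, which version of the model to use).
LOD_LEVELS = [(10.0, "high"), (30.0, "medium")]


def pick_lod(camera_pos, object_pos, levels=LOD_LEVELS, fallback="low"):
    """Choose a level of detail based on distance from the camera."""
    distance = math.dist(camera_pos, object_pos)
    # Use the first level whose distance limit the object falls within.
    for max_distance, name in levels:
        if distance <= max_distance:
            return name
    # Beyond every threshold, use the cheapest version of the model.
    return fallback
```

With these example thresholds, a sphere 5 units away would use the "high" model, one 20 units away the "medium" model, and anything past 30 units the "low" model.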

Geometric Theory

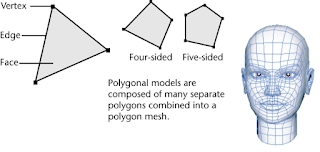

All shapes have certain components. The main ones for modelling in programs like Maya are the vertices, which act as the corners of the shape; the edges, which join the vertices; and the faces, which fill in the gaps between the edges. These can be used in different ways; for example, edges do not have to be straight, they can curve. Put together, these make a polygon which we can then manipulate into whatever shape we need for our game; however, only polygons that have been triangulated may be used this way. NURBS take up less data but are extremely heavy for your computer to run in video games, since the shape is defined by mathematics. They are, however, used in pre-rendered cut scenes. Primitive objects are mainly used to start off a design. You can then move, rotate and scale the shape using the coordinate axes found in most 3D modelling programs: X, Y and Z, with X being the horizontal, Y the vertical and Z the depth.
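To make the relationship between vertices, edges and faces more concrete, here is a small Python sketch that stores a square as a list of vertices plus one face, splits that face into triangles, and moves the shape along the X axis. The function names (`triangulate`, `edges_of`, `translate`) are made up for the example and this is not how Maya stores its meshes; the fan method of triangulating also only works for simple convex faces.

```python
def triangulate(face):
    """Split a convex face (a list of vertex indices) into triangles.

    Uses a simple "fan": every triangle shares the first vertex.
    """
    return [(face[0], face[i], face[i + 1]) for i in range(1, len(face) - 1)]


def edges_of(face):
    """Return the edges that join each vertex of a face to the next one."""
    count = len(face)
    return {tuple(sorted((face[i], face[(i + 1) % count]))) for i in range(count)}


def translate(vertices, dx, dy, dz):
    """Move every vertex along the X, Y and Z axes."""
    return [(x + dx, y + dy, z + dz) for x, y, z in vertices]


# A flat square: four vertices (X, Y, Z) and one face that uses all of them.
square_vertices = [(0, 0, 0), (1, 0, 0), (1, 1, 0), (0, 1, 0)]
square_face = [0, 1, 2, 3]

triangles = triangulate(square_face)         # two triangles
edges = edges_of(square_face)                # four edges
moved = translate(square_vertices, 1, 0, 0)  # shifted one unit along X
```

The four-sided face becomes the two triangles `(0, 1, 2)` and `(0, 2, 3)`, which is the form a game engine would typically expect.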


There are several types of modelling. The first is box modelling, where you start from a primitive object to make the rough shape of the model you want and then sculpt more detail on top of it. Another type is extrusion modelling, where you import a front and a side view into Maya and use shapes to trace the outline, ending up with a 3D model.
